Work zones sit at a difficult intersection of mobility and safety. Traffic needs to keep moving, but road crews work only a few feet from fast-moving vehicles, often protected by little more than cones and temporary signs. Edge-native artificial intelligence (AI) can give workers an always-alert digital lookout that monitors for risky behavior in real time and helps agencies intervene before a close call becomes a serious crash.

Why Work Zones Need Smarter Eyes

Drivers do not always adjust their behavior when they enter a work zone. Speeding, last‑second lane changes, distraction, and impatience are common, particularly on busy corridors where work is carried out under time pressure. Traditional countermeasures—static signs, flashing beacons, and occasional patrol presence—can help, but they rely on drivers noticing and obeying warnings in the moment.

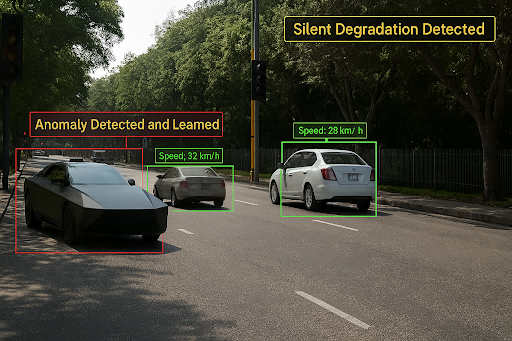

Edge AI changes that equation by placing intelligence on the devices that are already near the work zone: temporary cameras, trailers, smart cones, or poles. Instead of simply recording footage for later review, these devices process video and sensor data locally, detecting vehicles that are traveling too fast, veering too close to workers, or cutting across closed lanes. Because the models run on the edge rather than in a remote data center, the system can react in real time, even when connectivity is weak or non‑existent.

This local processing also allows the AI to adapt to the specific work zone it is monitoring. Conditions vary widely from site to site: a night‑time lane closure on a highway, a single‑lane alternating flow controlled by flaggers, or a short‑term utility trench on a neighborhood street. A self‑learning system can observe how traffic typically behaves at that location and time of day, then refine its thresholds to distinguish between normal, manageable variations and genuinely dangerous maneuvers.
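One way to picture this kind of site-specific tuning is a rolling speed baseline. The sketch below is a minimal illustration, not a description of any particular product: the class name `AdaptiveSpeedThreshold`, the window size, the `k` multiplier, and the choice of "mean plus k standard deviations" are all assumptions made for the example.

```python
from collections import deque
from statistics import mean, pstdev


class AdaptiveSpeedThreshold:
    """Rolling per-site speed baseline (hypothetical helper for illustration)."""

    def __init__(self, posted_limit_kph, window=500, k=2.0, min_samples=30):
        self.posted_limit_kph = posted_limit_kph
        self.k = k                      # assumed sensitivity: std devs above the mean
        self.min_samples = min_samples  # observations needed before trusting the baseline
        self.speeds = deque(maxlen=window)  # only the most recent `window` speeds are kept

    def observe(self, speed_kph):
        """Record one measured vehicle speed at this site."""
        self.speeds.append(speed_kph)

    def threshold(self):
        """Return the speed above which a vehicle is treated as dangerous."""
        # Until enough traffic has been seen, fall back to the posted limit.
        if len(self.speeds) < self.min_samples:
            return self.posted_limit_kph
        learned = mean(self.speeds) + self.k * pstdev(self.speeds)
        # Assumption: never flag vehicles travelling below the posted limit.
        return max(self.posted_limit_kph, learned)

    def is_dangerous(self, speed_kph):
        return speed_kph > self.threshold()


site = AdaptiveSpeedThreshold(posted_limit_kph=60, min_samples=5)
for speed in [58, 62, 60, 61, 59, 60]:
    site.observe(speed)
print(site.is_dangerous(80))  # True: far above the learned baseline
print(site.is_dangerous(61))  # False: within normal variation for this site
```

A real deployment would likely keep separate baselines per time of day and cap how far the learned threshold may drift above the posted limit, so that habitual speeding at a site does not become the new normal.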

How Edge AI Watches Over Crews

In a typical deployment, a trailer or mast equipped with cameras and sensors is placed at the approach to a work zone and at key points inside it. Edge AI models analyze the live feeds frame by frame, measuring vehicle speed, distance from workers, lane positions, and behaviors such as abrupt braking or weaving. When the system detects a high‑risk situation—like a speeding vehicle approaching a taper or a truck drifting into a protected area—it can trigger immediate alerts.
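The checks described above can be sketched as a simple rule pass over each tracked vehicle. This is an illustrative outline only: the `TrackedVehicle` fields, the `assess_risk` function, and the default limits (60 km/h, 3 m) are hypothetical, and it assumes an upstream detector and tracker have already estimated speed, distance, and lane position.

```python
from dataclasses import dataclass


@dataclass
class TrackedVehicle:
    """Per-vehicle measurements assumed to come from an upstream tracker."""
    track_id: int
    speed_kph: float
    distance_to_workers_m: float
    in_closed_lane: bool


def assess_risk(vehicle, speed_limit_kph=60.0, min_gap_m=3.0):
    """Return the list of risk conditions this vehicle currently triggers."""
    reasons = []
    if vehicle.speed_kph > speed_limit_kph:
        reasons.append("speeding")
    if vehicle.distance_to_workers_m < min_gap_m:
        reasons.append("too_close_to_workers")
    if vehicle.in_closed_lane:
        reasons.append("closed_lane_intrusion")
    return reasons


truck = TrackedVehicle(track_id=7, speed_kph=82.0,
                       distance_to_workers_m=2.1, in_closed_lane=False)
print(assess_risk(truck))  # ['speeding', 'too_close_to_workers']
```

An empty list means no alert is needed; anything else can be handed to whatever alerting logic the site uses.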

Those alerts might take several forms. On‑site, they can activate variable message signs, strobes, or audible warnings to get drivers’ attention in the moment. Inside the work zone, crew supervisors can receive notifications on tablets or radios, allowing them to move workers to safer positions or adjust traffic control. For serious violations, the system can capture evidence clips for later enforcement or driver education.
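A tiered mapping from severity to response is one plausible way to organize these alert channels. The severity levels and action names below are assumptions chosen for illustration; an agency would define its own tiers and wire each action to real hardware or messaging.

```python
from enum import IntEnum


class Severity(IntEnum):
    """Hypothetical severity tiers for a detected event."""
    LOW = 1
    HIGH = 2
    CRITICAL = 3


def route_alert(severity):
    """Return the actions to trigger, escalating as severity increases."""
    actions = ["message_sign"]  # driver-facing warning for every event
    if severity >= Severity.HIGH:
        actions += ["strobe", "supervisor_notification"]
    if severity >= Severity.CRITICAL:
        actions += ["audible_warning", "evidence_clip"]
    return actions


print(route_alert(Severity.LOW))       # ['message_sign']
print(route_alert(Severity.CRITICAL))  # all five actions
```

Keeping the mapping in one small function makes it easy to review and adjust which events reach the crew and which only reach drivers.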

Over days and weeks, the system's picture of the site becomes more reliable. The AI learns which times of day see the riskiest behavior, which approach angles produce more conflicts, and how changes in layout affect driver reactions. Agencies can use these insights to redesign work zone plans, schedule the most hazardous operations for safer time windows, or justify the use of additional protective equipment, such as crash cushions and barrier trucks.
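As a small illustration of the time-of-day insight, logged event timestamps can be bucketed by hour. The function names and the sample timestamps here are made up for the example; a real system would read events from its own log.

```python
from collections import Counter
from datetime import datetime


def risky_events_by_hour(timestamps):
    """Count logged high-risk events per hour of day (0-23)."""
    return Counter(ts.hour for ts in timestamps)


def riskiest_hours(timestamps, top_n=3):
    """Return the hours with the most events, busiest first."""
    return [hour for hour, _ in risky_events_by_hour(timestamps).most_common(top_n)]


# Illustrative sample data, not real measurements.
events = [
    datetime(2024, 5, 1, 22, 5),
    datetime(2024, 5, 1, 22, 40),
    datetime(2024, 5, 2, 22, 15),
    datetime(2024, 5, 2, 6, 30),
    datetime(2024, 5, 3, 6, 45),
    datetime(2024, 5, 3, 14, 0),
]
print(riskiest_hours(events, top_n=2))  # [22, 6]
```

The same grouping could be applied to approach direction or layout version to compare how different configurations perform.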

Balancing Safety, Privacy, and Practicality

Any system that monitors drivers raises understandable questions about privacy and oversight. Edge‑native AI offers one practical safeguard: by processing data on‑site, it reduces the need to stream or store raw video centrally. Only short clips associated with verified high‑risk events or violations need to be retained for review, training, or enforcement. That design narrows the scope of data collection to what is strictly necessary for safety outcomes.
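The retention idea can be sketched with a fixed-size ring buffer: recent frames are held briefly in memory and are copied out only when an event is verified. The `ClipBuffer` class, its method names, and the buffer size are hypothetical, and frames are stood in for by plain values.

```python
from collections import deque


class ClipBuffer:
    """Holds only the most recent frames; older frames are discarded automatically."""

    def __init__(self, max_frames=150):
        self.frames = deque(maxlen=max_frames)  # e.g. a few seconds of video
        self.retained = []                      # (event_id, clip) pairs kept for review

    def add_frame(self, frame):
        self.frames.append(frame)

    def on_event(self, event_id, verified):
        """Retain the buffered frames only if the event was verified as high risk."""
        if not verified:
            return None  # nothing is stored for unverified detections
        clip = list(self.frames)
        self.retained.append((event_id, clip))
        return clip


buf = ClipBuffer(max_frames=3)
for frame_number in range(5):
    buf.add_frame(frame_number)
print(buf.on_event("evt-1", verified=True))   # [2, 3, 4]
print(buf.on_event("evt-2", verified=False))  # None
```

Everything outside the retained clips simply ages out of the buffer, which is what keeps continuous raw footage from accumulating.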

From a cost and operations standpoint, edge deployments fit the realities of many agencies. Work zones are temporary, budgets are constrained, and connectivity at highway sites is not always reliable. Devices that can be dropped in, powered locally, and operate autonomously without expensive networking infrastructure are easier to justify. They also scale: once the core AI stack is proven, the same approach can be replicated across many sites with modest incremental cost.

The promise of edge AI in work zones is not to replace human judgment but to support it. Crews and traffic engineers still decide where to place barriers, how to phase closures, and when to pause work. What changes is their visibility. Instead of learning about risky behavior only from crash reports or anecdotes, they gain a continuous, data‑driven view of how drivers actually behave around their sites.

If this technology delivers on its potential, a work zone will no longer be protected only by cones and signs. It will be watched over by a tireless, adaptive digital lookout—one that never gets distracted, never looks away, and is tuned specifically to the risks facing the crews on that stretch of road.