Across industries, artificial intelligence has become the secret ingredient in hiring — sorting résumés, evaluating interviews, and even predicting employee success. Companies eager to streamline recruitment often trust these algorithms to make unbiased judgments, replacing instinct with data. Yet beneath this promise lies a pressing question: can a system built by humans ever truly escape human bias?

Recent controversies have made that question uncomfortably real. When early hiring algorithms trained on historical data began favoring certain demographics, it became clear that bias doesn’t vanish in digital form; it mutates. Instead of a recruiter misjudging a candidate’s name or accent, an AI system might quietly filter out résumés based on patterns it learned from decades of discriminatory hiring. The result is smooth software with skewed outcomes.

Tech firms and regulators are racing to change that narrative. The conversation has evolved from “Can AI replace humans?” to “Can AI help humans make fairer choices?” That shift, subtle but crucial, sets the stage for where ethical AI might take hiring next.

Data That Mirrors Diversity

Truly inclusive hiring systems start with data that is diverse, transparent, and representative. An algorithm is only as fair as the examples it’s trained on. If those samples reflect gender imbalance or cultural bias, the machine simply replicates these blind spots at scale.

Leading technology companies are beginning to tackle this challenge by reengineering their data pipelines. Some now employ bias-auditing teams that monitor machine learning datasets for missing perspectives. Instead of relying exclusively on historical hiring outcomes, they’re integrating variables that cover socioeconomic background, geography, and neurodiversity, so the data better represents today’s workforce. The goal isn’t just neutrality but empathy: training AI to understand what diversity looks like in practice.
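One way to picture what such a dataset audit might check is a comparison of selection rates across groups in historical hiring records. The sketch below is only an illustration under stated assumptions: the record layout, the group labels, and the numbers are all made up, and the 0.8 cutoff borrows the "four-fifths" rule of thumb used in US employment selection guidelines rather than any method described by the companies above.

```python
from collections import Counter

def selection_rates(records):
    """Return the share of candidates marked as hired within each group.

    `records` is an iterable of (group, hired) pairs. This layout is an
    assumption made for the illustration, not a standard format.
    """
    totals = Counter()
    hires = Counter()
    for group, hired in records:
        totals[group] += 1
        if hired:
            hires[group] += 1
    return {group: hires[group] / totals[group] for group in totals}

def impact_ratios(rates):
    """Divide each group's selection rate by the highest group's rate."""
    best = max(rates.values())
    if best == 0:
        # Nobody was selected in any group, so there is no gap to report.
        return {group: 1.0 for group in rates}
    return {group: rate / best for group, rate in rates.items()}

# Made-up historical outcomes for two hypothetical groups, not real data.
history = (
    [("group_a", True)] * 30 + [("group_a", False)] * 70
    + [("group_b", True)] * 15 + [("group_b", False)] * 85
)

rates = selection_rates(history)
ratios = impact_ratios(rates)

# Flag groups whose rate falls below four-fifths of the highest rate.
flagged = sorted(group for group, ratio in ratios.items() if ratio < 0.8)
print(rates, ratios, flagged)
```

A flag like this is a prompt for human review rather than a verdict. A model trained on records with such a gap is likely to reproduce it unless the data or the training process is adjusted.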

For corporations seeking to modernize recruitment, this approach signals a new ethical frontier. Inclusive data encourages algorithms to widen their definition of “qualified,” helping them recognize potential beyond typical credentials or universities. It’s a reminder that fairness in technology starts long before the coding begins.

Technology Meets Ethics in the Boardroom

The conversation about algorithmic bias is no longer confined to data scientists or ethicists; it now belongs in the executive suite. Forward-thinking leaders view ethical AI as part of governance, just as compliance and sustainability have become corporate pillars. When hiring decisions influence culture, innovation, and public image, the way those decisions are automated matters more than ever.

Boardrooms are beginning to view AI ethics as a strategic advantage. A transparent recruitment algorithm that explains its reasoning — rather than concealing it in a “black box” — fosters trust both inside and outside the company. Employees are more likely to engage with technology they understand, and job candidates are more willing to apply when they believe the system evaluates fairly. In an era of talent shortages, fairness becomes a competitive edge.

Just as sustainability practices define modern business credibility, ethical AI may soon become part of a firm’s social license to operate. Companies investing in these technologies aren’t only improving HR efficiency; they’re future-proofing their reputation in a world that values accountability.

Redefining Inclusion in the Age of Machines

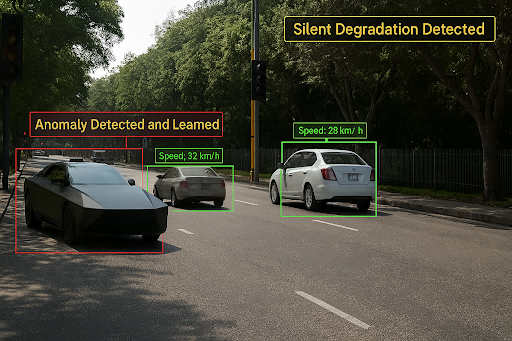

While fear of discrimination by algorithms still lingers, emerging evidence suggests that AI, when used thoughtfully, can be a force for equity rather than exclusion. Machine learning models equipped with bias-correction layers can help recruiters identify overlooked candidates. Sentiment analysis tools can flag phrasing in job descriptions that unintentionally discourages minority applicants. Predictive analytics can spotlight potential internal promotions based on ability instead of tenure.
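To make the job-description idea concrete, here is a deliberately simple sketch. It is a plain keyword lookup, not sentiment analysis, and the watch list and suggested replacements are hypothetical examples invented for this illustration rather than a vetted lexicon.

```python
import re

# Hypothetical watch list with suggested replacements (an assumption for
# this sketch); a real tool would rely on a researched, reviewed lexicon.
WATCH_TERMS = {
    "rockstar": "skilled professional",
    "ninja": "expert",
    "digital native": "comfortable with digital tools",
    "young and energetic": "motivated",
}

def flag_phrasing(text, watch_terms=WATCH_TERMS):
    """Return (term, suggestion, position) for each watch-list hit in text."""
    hits = []
    for term, suggestion in watch_terms.items():
        # Word boundaries avoid matching inside longer words.
        pattern = r"\b" + re.escape(term) + r"\b"
        for match in re.finditer(pattern, text, flags=re.IGNORECASE):
            hits.append((term, suggestion, match.start()))
    # Report hits in the order they appear in the posting.
    return sorted(hits, key=lambda hit: hit[2])

posting = "We need a coding ninja, ideally a digital native, to join our team."
hits = flag_phrasing(posting)
for term, suggestion, position in hits:
    print(f"'{term}' at character {position}: consider '{suggestion}'")
```

Even a list this small shows the design choice at stake: the tool suggests and a person decides, which keeps the recruiter responsible for the final wording.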

These developments reveal something profound: fairness doesn’t happen by accident. It takes deliberate design, ethical oversight, and ongoing training to ensure technology reflects humanity at its best — not its worst. Each line of code becomes a silent statement about who deserves opportunity.

As the next wave of innovation unfolds, the intersection of AI and human values will define how societies build inclusion at scale. Instead of fearing automation, perhaps the challenge is to teach machines the moral intelligence that humans have long struggled to master. When technology aligns with empathy, hiring could become not only faster but fairer — transforming workplaces into examples of digital integrity.